Natural Language Processing (NLP) Platforms Market Size, Share & Trends Analysis Report by Component (Core NLP Engine (Tokenization, Parsing), Pre-trained Models & Model Zoo, Training & Fine-tuning Modules, Inference / Serving Infrastructure, Embeddings & Semantic Search Modules, Conversational / Dialogue Management, Analytics, Visualization & Explainability, Data Labeling & Annotation Tools, Others), Deployment Mode, Technology, Functionality, Integration, Organization Size, Application/ Use Case, Industry Vertical and Geography (North America, Europe, Asia Pacific, Middle East, Africa, and South America) – Global Industry Data, Trends, and Forecasts, 2026–2035

Natural Language Processing (NLP) Platforms Market Size, Share, and Growth

The global natural language processing (NLP) platforms market is growing rapidly: it is valued at an estimated USD 31.2 billion in 2025 and is projected to reach USD 276.9 billion by 2035, a CAGR of 24.4% over the forecast period.
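The headline figures can be cross-checked with the standard compound annual growth rate formula. The sketch below assumes ten compounding periods (2025 as the base year through 2035), which is an assumption rather than something the report states; the function names `cagr` and `project` are illustrative only.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures quoted in the report (USD billion); the 10-year span is an assumption.
rate = cagr(31.2, 276.9, 10)
print(f"{rate:.1%}")  # prints 24.4%

# Compounding the base value at the computed rate recovers the 2035 figure.
value_2035 = project(31.2, rate, 10)
```

Under that assumption the computed rate agrees with the stated 24.4%, so the three headline numbers are mutually consistent.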

Stefan Toth, Executive Director of Systems Engineering, Global Technology Services at Verizon, said, "Our AI‑enabled NLP platform is a major source of power for engineers as it automates the monotonous activities which the engineers have to do on their own and thus, they become free to take up complex and high-value scenarios. We are able to achieve the goals of operational efficiency, remodeling of workflows, and fast-tracking of digital transformation through the use of cutting-edge language understanding and intelligent automation."

Several factors are driving this expansion and accelerating adoption of the technology. For instance, in October 2025, OpenAI introduced a natural language processing model offering real-time multilingual text comprehension and context-aware generated responses, allowing companies to improve customer engagement and automate content workflows.

Growing volumes of unstructured data, rising demand for AI-based customer support, and the need for intelligent document processing are pushing enterprises toward NLP platforms. A recent example is the September 2025 update to Google Cloud's Vertex AI language tools, which helps businesses build domain-specific NLP applications that handle their data scalably and efficiently.

Strict regulatory and compliance requirements for data privacy and accuracy in healthcare, finance, and legal services are prompting enterprises to invest heavily in NLP solutions. Advances in technology, industry regulation, and growing data complexity are the main forces behind the NLP platforms market, and adoption in turn supports better decision-making, higher operational efficiency, and improved customer experiences.

The market also has notable adjacent opportunities, including AI-powered sentiment analysis, conversational AI, automated transcription services, knowledge management solutions, and real-time language translation tools. By moving into these areas, providers can broaden their offerings, improve enterprise productivity, and open new revenue streams in AI-driven text and speech analytics.

Natural Language Processing (NLP) Platforms Market Dynamics and Trends

Driver: AI, Cloud, and Multilingual Capabilities Driving Natural Language Processing Platforms Adoption

- The global natural language processing platforms market is evolving rapidly, driven mainly by the growing use of artificial intelligence and machine learning in industries such as healthcare, finance, retail, and education, where text analysis, predictive insights, and automated customer engagement are in high demand. In October 2025, OpenAI released domain‑specific language models with enhanced capabilities, enabling enterprises to automate document workflows, analyze customer feedback, and improve multilingual support.

- Cloud-based natural language processing solutions and scalable APIs are gradually lowering the entry barriers that small and medium-sized businesses have faced, extending adoption beyond large enterprises. Google Cloud’s Vertex AI language tools, updated in September 2025, illustrate this trend by letting companies build customized natural language processing applications efficiently.

- Growth in digital communication, the globalization of business, and multilingual requirements have further increased demand for natural language processing platforms, pushing enterprises toward solutions that deliver real-time insights, raise operational efficiency, and improve customer experiences.

Restraint: Data Privacy, Integration Complexity, and Model Bias Limiting Natural Language Processing Platforms Scale-up

- Despite this growth, adoption of natural language processing platforms is still held back by data privacy and compliance requirements, mainly in tightly regulated sectors such as healthcare, finance, and legal services. Outdated IT systems, fragmented data pipelines, and integration complexity remain the main causes of slow deployments, particularly in organizations that lack a modern data infrastructure.

- Explainability and ethical concerns around advanced transformer-based and generative AI models also restrict their use in highly regulated environments. High implementation and maintenance costs, including cloud infrastructure, specialized talent, and continuous model fine-tuning, keep advanced natural language processing solutions out of reach for many smaller businesses.

- Weak model generalization, poor coverage of low-resource languages, and largely unintentional bias in outputs further limit adoption in diverse, global markets.

Opportunity: Domain-Specific NLP, Multilingual Expansion, and New Verticals

- Domain-specific natural language processing (NLP) solutions, such as clinical document analysis, contract review, financial compliance monitoring, and customer support automation, offer significant potential. Growth in non-English-speaking and emerging markets creates room for multilingual natural language processing platforms, as companies increasingly demand localized solutions.

- Integrating natural language processing with speech, voice, and multimodal AI opens further avenues for innovation, enabling new products in conversational AI, transcription, and real-time translation. Wider use of natural language processing in knowledge management, market intelligence, and AI-driven decision support creates high-value opportunities for enterprises seeking a competitive advantage.

- Partnerships between natural language processing vendors and cloud providers, along with SaaS integrations, are creating ecosystems that simplify deployment and shorten time-to-value for customers.

Key Trend: Generative AI, Explainability, Privacy-Preserving NLP, and Real-Time Insights

- Generative AI and other transformer-based language models, together with retrieval-augmented and domain-adapted architectures, are the main factors behind more versatile and accurate natural language processing platforms. Explainable AI (XAI) and privacy-preserving natural language processing approaches are gaining traction as ways to provide transparency, trust, and compliance in sensitive industries.

- Hybrid deployment models that combine cloud, edge, and on-premises solutions are being developed to meet the scalability, latency, and privacy needs of enterprise natural language processing applications. Real-time analytics and AI-driven customer insights are becoming major trends, with NLP supporting dynamic content generation, chatbots, and intelligent automation.

- The convergence of natural language processing with knowledge graphs, semantic search, and AI-powered recommendation engines is helping companies make better-informed decisions and deliver more contextualized experiences to their users.

Natural Language Processing (NLP) Platforms Market Analysis and Segmental Data

“In Deployment Mode, Cloud-Based Dominates Global Natural Language Processing (NLP) Platforms Market”

- The cloud-based deployment model accounts for the largest share of the global natural language processing platforms market. Cloud-native natural language processing solutions provide scalability and flexibility and require less infrastructure investment, making advanced language AI available to enterprises of all sizes.

- As digital transformation accelerates across industries such as customer service, healthcare, finance, e-commerce, and content generation, companies are turning to cloud-deployed natural language processing to cope with large volumes of unstructured text, automate workflows, and support real-time analytics and conversational AI.

- For example, in 2025 a major cloud natural language processing provider introduced generative-AI-based natural-language query generation for log data and analytics, allowing enterprises to run complex data queries with plain-language prompts instead of code. This illustrates how cloud natural language processing services are becoming more user-friendly and shortening time-to-value.

“North America Leads the Natural Language Processing (NLP) Platforms Market”

- North America is the largest contributor to the global natural language processing platforms market, supported by the many cloud service providers, AI research laboratories, and technology-oriented companies active in healthcare, BFSI, retail, and telecommunications. The region benefits from early adoption of AI and machine learning, high digital maturity, and a robust enterprise infrastructure that scales readily across customer analytics, document processing, and conversational AI applications.

- In addition, industry regulations such as HIPAA, state-level data protection laws, and compliance requirements across the healthcare and financial sectors act as enablers of natural language processing platform adoption. They require deployed natural language processing solutions to offer better explainability, more auditable AI behavior, and stronger data-governance capabilities.

- North American leadership also reflects heavy investment by cloud platform providers in the United States in integrating natural language processing with enterprise suites, enabling advanced functions such as sentiment analysis, automatic speech recognition, content generation, and intelligent process automation. Research from leading universities and AI labs also fuels continuous innovation in large language models, multimodal natural language processing, and domain-specific model fine-tuning.

- Moreover, extensive use of natural language processing in virtual assistants, fraud detection, patient engagement, and omnichannel customer experience has been a key factor in North America's dominance. Enterprises across industries increasingly use natural language processing to automate knowledge extraction, improve customer support, and raise operational efficiency, making the region a global hub for natural language processing platform adoption and innovation.

Natural Language Processing (NLP) Platforms Market Ecosystem

The natural language processing (NLP) platforms market is increasingly consolidated around a few major technology vendors, including OpenAI, Google, Microsoft, Amazon Web Services, Hugging Face, and IBM, whose transformer architectures, large language models, and cloud-scale inference engines underpin most enterprise deployments. These companies drive innovation through specialized products: domain-adapted LLMs for legal and healthcare documentation, speech-to-text engines for contact centers, retrieval-augmented generation (RAG) tooling for knowledge retrieval, and semantic-search/knowledge-graph integrations that accelerate contextual understanding.

Government bodies, research institutions, and R&D labs are also major contributors to the market's evolution. For instance, in July 2025, the U.S. National Institutes of Health, following the release of its AI guidance, emphasized the need for explainability and reproducibility in health-oriented NLP research, which is steering vendor roadmaps toward auditable models. In April 2025, OpenAI launched a program to promote domain-specific benchmarks and tooling, enabling more accurate, task-aligned model evaluation and enterprise readiness. An institutional study from October 2025 found that integrating NLP into real-world systematic review screening increased screening efficiency while maintaining accuracy, a measurable operational gain.

As a result, the main players are investing heavily in product diversification, incorporating multimodal functionality, cloud and hybrid deployment options, and managed services, so that they can provide scalable, compliant, and productivity-enhancing NLP solutions to enterprise customers.

Recent Development and Strategic Overview:

- In July 2025, Hugging Face released Sentence Transformers v5.0, which introduced sparse-embedding models whose representations have more than 30,000 dimensions and fewer than 1% non-zero entries, making hybrid search and semantic retrieval faster and more scalable. With this update, companies can build high-performing, large-scale solutions for document similarity and semantic search, and advanced NLP becomes accessible even in resource-constrained environments.

- In September 2025, Qwen, the model family developed by Alibaba Cloud, unveiled Qwen3-Next, a hybrid-attention, mixture-of-experts (MoE) model that achieves more than 10× higher inference throughput on long-context tasks while halving training cost, allowing enterprises to use efficient large-context NLP for document analysis, legal review, and other high-volume, text-heavy tasks.
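To see why the sparse embeddings described for Sentence Transformers v5.0 (more than 30,000 dimensions, under 1% non-zero entries) suit fast retrieval, the sketch below stores only the non-zero entries of each vector and scores a query against a document with a sparse dot product. It is a plain-Python illustration, not the Sentence Transformers API; the dimension `DIM`, the active-entry count `NONZERO`, and all function names are hypothetical choices made for this example.

```python
import random

DIM = 30_522    # assumed dimensionality (hypothetical, just above 30,000)
NONZERO = 200   # assumed number of active entries, well under 1% of DIM


def random_sparse_vector(rng: random.Random, dim: int = DIM, nonzero: int = NONZERO) -> dict:
    """Build a toy sparse vector as {index: weight}, keeping only non-zero entries."""
    indices = rng.sample(range(dim), nonzero)
    return {i: rng.random() for i in indices}


def sparse_dot(a: dict, b: dict) -> float:
    """Dot product that visits only indices present in the smaller vector."""
    if len(a) > len(b):
        a, b = b, a
    return sum(weight * b[i] for i, weight in a.items() if i in b)


def density(vec: dict, dim: int = DIM) -> float:
    """Fraction of dimensions that are stored (non-zero by construction)."""
    return len(vec) / dim


rng = random.Random(0)
query = random_sparse_vector(rng)
doc = random_sparse_vector(rng)
score = sparse_dot(query, doc)
print(f"density={density(query):.4f}")
```

Because the work scales with the number of stored entries rather than with the full dimensionality, each comparison here touches at most 200 entries instead of more than 30,000, which is the property that makes such representations attractive for hybrid and large-scale search.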

Report Scope

| Attribute | Detail |
| --- | --- |
| Market Size in 2025 | USD 31.2 Bn |
| Market Forecast Value in 2035 | USD 276.9 Bn |
| Growth Rate (CAGR) | 24.4% |
| Forecast Period | 2026 – 2035 |
| Historical Data Available for | 2021 – 2024 |
| Market Size Units | USD Bn for Value |
| Report Format | Electronic (PDF) + Excel |
| Regions and Countries Covered | North America; Europe; Asia Pacific; Middle East; Africa; South America |
| Companies Covered | |

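Natural Language Processing (NLP) Platforms Market Segmentation and Highlights

| Segment | Sub-segment |
| --- | --- |
| Natural Language Processing (NLP) Platforms Market, By Component | Core NLP Engine (tokenization, parsing); Pre-trained Models & Model Zoo; Training & Fine-tuning Modules; Inference / Serving Infrastructure; Embeddings & Semantic Search Modules; Conversational / Dialogue Management; Analytics, Visualization & Explainability; Data Labeling & Annotation Tools; Others |
| Natural Language Processing (NLP) Platforms Market, By Deployment Mode | Cloud-Based; On-Premises; Hybrid |
| Natural Language Processing (NLP) Platforms Market, By Technology | Transformer-based Large Language Models (LLMs); Lightweight / Edge NLP Models (distilled, quantized); Rule-based / Symbolic NLP Engines; Retrieval-Augmented Generation (RAG) Frameworks; Hybrid (neuro-symbolic) Approaches; Multimodal NLP (text+vision+audio); Others |
| Natural Language Processing (NLP) Platforms Market, By Functionality | Text Classification & Tagging; Named Entity Recognition (NER); Sentiment & Emotion Analysis; Machine Translation & Localization; Summarization (abstractive & extractive); Question Answering & Semantic Search; Intent Detection & Slot-filling; Text Generation & Creative Writing; Others |
| Natural Language Processing (NLP) Platforms Market, By Integration | Cloud Provider-native Integrations (AWS/GCP/Azure); Data Platform & Lake Integrations (Snowflake, Databricks); CRM/ERP/Contact Center Integrations (Salesforce, SAP); Messaging Channels & Voice Platform Connectors; Vector DB & Search Engine Integrations; Others |
| Natural Language Processing (NLP) Platforms Market, By Organization Size | Large Enterprises; Small & Medium Enterprises (SMEs) |
| Natural Language Processing (NLP) Platforms Market, By Application/ Use Case | Conversational Agents & Virtual Assistants; Customer Support Automation (ticketing, routing); Knowledge Management & Semantic Search; Content Generation & Marketing Automation; Compliance Monitoring & Contract Analytics; Voice-to-Text / Speech-enabled NLP Workflows; Clinical / Healthcare Text Mining; Legal & Contract Intelligence; Others |
| Natural Language Processing (NLP) Platforms Market, By Industry Vertical | IT & Telecom; Financial Services & Banking; Healthcare & Life Sciences; Retail & E-commerce; Media & Entertainment; Government & Public Sector; Legal Services; Education & EdTech; Others |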

Table of Contents

- 1. Research Methodology and Assumptions

- 1.1. Definitions

- 1.2. Research Design and Approach

- 1.3. Data Collection Methods

- 1.4. Base Estimates and Calculations

- 1.5. Forecasting Models

- 1.5.1. Key Forecast Factors & Impact Analysis

- 1.6. Secondary Research

- 1.6.1. Open Sources

- 1.6.2. Paid Databases

- 1.6.3. Associations

- 1.7. Primary Research

- 1.7.1. Primary Sources

- 1.7.2. Primary Interviews with Stakeholders across Ecosystem

- 2. Executive Summary

- 2.1. Global Natural Language Processing (NLP) Platforms Market Outlook

- 2.1.1. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), and Forecasts, 2021-2035

- 2.1.2. Compounded Annual Growth Rate Analysis

- 2.1.3. Growth Opportunity Analysis

- 2.1.4. Segmental Share Analysis

- 2.1.5. Geographical Share Analysis

- 2.2. Market Analysis and Facts

- 2.3. Supply-Demand Analysis

- 2.4. Competitive Benchmarking

- 2.5. Go-to-Market Strategy

- 2.5.1. Customer/ End-use Industry Assessment

- 2.5.2. Growth Opportunity Data, 2026-2035

- 2.5.2.1. Regional Data

- 2.5.2.2. Country Data

- 2.5.2.3. Segmental Data

- 2.5.3. Identification of Potential Market Spaces

- 2.5.4. GAP Analysis

- 2.5.5. Potential Attractive Price Points

- 2.5.6. Prevailing Market Risks & Challenges

- 2.5.7. Preferred Sales & Marketing Strategies

- 2.5.8. Key Recommendations and Analysis

- 2.5.9. A Way Forward

- 3. Industry Data and Premium Insights

- 3.1. Global Information Technology & Media Ecosystem Overview, 2025

- 3.1.1. Information Technology & Media Industry Analysis

- 3.1.2. Key Trends for Information Technology & Media Industry

- 3.1.3. Regional Distribution for Information Technology & Media Industry

- 3.2. Supplier Customer Data

- 3.3. Technology Roadmap and Developments

- 4. Market Overview

- 4.1. Market Dynamics

- 4.1.1. Drivers

- 4.1.1.1. Rising demand for automated text processing, sentiment analysis, and conversational AI to streamline customer interactions and enterprise workflows.

- 4.1.1.2. Growing adoption of large language models (LLMs) for summarization, translation, and contextual content generation.

- 4.1.1.3. Increasing investment in cloud-based NLP platforms, enterprise AI governance tools, and multilingual model deployment.

- 4.1.2. Restraints

- 4.1.2.1. High computational costs of training and inference for advanced LLM-driven NLP applications.

- 4.1.2.2. Challenges integrating NLP systems with legacy IT, proprietary datasets, and industry-specific compliance frameworks.

- 4.2. Key Trend Analysis

- 4.3. Regulatory Framework

- 4.3.1. Key Regulations, Norms, and Subsidies, by Key Countries

- 4.3.2. Tariffs and Standards

- 4.3.3. Impact Analysis of Regulations on the Market

- 4.4. Value Chain Analysis

- 4.4.1. Data Collection, Annotation & Model Training Providers

- 4.4.2. System Integrators/ Technology Providers

- 4.4.3. NLP Platform Providers

- 4.4.4. End Users

- 4.5. Cost Structure Analysis

- 4.5.1. Cost Share by Parameter

- 4.5.2. COGP vs COGS

- 4.5.3. Profit Margin Analysis

- 4.6. Pricing Analysis

- 4.6.1. Regional Pricing Analysis

- 4.6.2. Segmental Pricing Trends

- 4.6.3. Factors Influencing Pricing

- 4.7. Porter’s Five Forces Analysis

- 4.8. PESTEL Analysis

- 4.9. Global Natural Language Processing (NLP) Platforms Market Demand

- 4.9.1. Historical Market Size – Value (US$ Bn), 2020-2024

- 4.9.2. Current and Future Market Size – Value (US$ Bn), 2026–2035

- 4.9.2.1. Y-o-Y Growth Trends

- 4.9.2.2. Absolute $ Opportunity Assessment

- 5. Competition Landscape

- 5.1. Competition Structure

- 5.1.1. Fragmented vs. Consolidated

- 5.2. Company Share Analysis, 2025

- 5.2.1. Global Company Market Share

- 5.2.2. By Region

- 5.2.2.1. North America

- 5.2.2.2. Europe

- 5.2.2.3. Asia Pacific

- 5.2.2.4. Middle East

- 5.2.2.5. Africa

- 5.2.2.6. South America

- 5.3. Product Comparison Matrix

- 5.3.1. Specifications

- 5.3.2. Market Positioning

- 5.3.3. Pricing

- 6. Global Natural Language Processing (NLP) Platforms Market Analysis, by Component

- 6.1. Key Segment Analysis

- 6.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Component, 2021-2035

- 6.2.1. Core NLP Engine (tokenization, parsing)

- 6.2.2. Pre-trained Models & Model Zoo

- 6.2.3. Training & Fine-tuning Modules

- 6.2.4. Inference / Serving Infrastructure

- 6.2.5. Embeddings & Semantic Search Modules

- 6.2.6. Conversational / Dialogue Management

- 6.2.7. Analytics, Visualization & Explainability

- 6.2.8. Data Labeling & Annotation Tools

- 6.2.9. Others

- 7. Global Natural Language Processing (NLP) Platforms Market Analysis, by Deployment Mode

- 7.1. Key Segment Analysis

- 7.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Deployment Mode, 2021-2035

- 7.2.1. Cloud-Based

- 7.2.2. On-Premises

- 7.2.3. Hybrid

- 8. Global Natural Language Processing (NLP) Platforms Market Analysis, by Technology

- 8.1. Key Segment Analysis

- 8.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Technology, 2021-2035

- 8.2.1. Transformer-based Large Language Models (LLMs)

- 8.2.2. Lightweight / Edge NLP Models (distilled, quantized)

- 8.2.3. Rule-based / Symbolic NLP Engines

- 8.2.4. Retrieval-Augmented Generation (RAG) Frameworks

- 8.2.5. Hybrid (neuro-symbolic) Approaches

- 8.2.6. Multimodal NLP (text+vision+audio)

- 8.2.7. Others

- 9. Global Natural Language Processing (NLP) Platforms Market Analysis, by Functionality

- 9.1. Key Segment Analysis

- 9.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Functionality, 2021-2035

- 9.2.1. Text Classification & Tagging

- 9.2.2. Named Entity Recognition (NER)

- 9.2.3. Sentiment & Emotion Analysis

- 9.2.4. Machine Translation & Localization

- 9.2.5. Summarization (abstractive & extractive)

- 9.2.6. Question Answering & Semantic Search

- 9.2.7. Intent Detection & Slot-filling

- 9.2.8. Text Generation & Creative Writing

- 9.2.9. Others

- 10. Global Natural Language Processing (NLP) Platforms Market Analysis, by Integration

- 10.1. Key Segment Analysis

- 10.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Integration, 2021-2035

- 10.2.1. Cloud Provider-native Integrations (AWS/GCP/Azure)

- 10.2.2. Data Platform & Lake Integrations (Snowflake, Databricks)

- 10.2.3. CRM/ERP/Contact Center Integrations (Salesforce, SAP)

- 10.2.4. Messaging Channels & Voice Platform Connectors

- 10.2.5. Vector DB & Search Engine Integrations

- 10.2.6. Others

- 11. Global Natural Language Processing (NLP) Platforms Market Analysis, by Organization Size

- 11.1. Key Segment Analysis

- 11.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Organization Size, 2021-2035

- 11.2.1. Large Enterprises

- 11.2.2. Small & Medium Enterprises (SMEs)

- 12. Global Natural Language Processing (NLP) Platforms Market Analysis, by Application/ Use Case

- 12.1. Key Segment Analysis

- 12.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Application/ Use Case, 2021-2035

- 12.2.1. Conversational Agents & Virtual Assistants

- 12.2.2. Customer Support Automation (ticketing, routing)

- 12.2.3. Knowledge Management & Semantic Search

- 12.2.4. Content Generation & Marketing Automation

- 12.2.5. Compliance Monitoring & Contract Analytics

- 12.2.6. Voice-to-Text / Speech-enabled NLP Workflows

- 12.2.7. Clinical / Healthcare Text Mining

- 12.2.8. Legal & Contract Intelligence

- 12.2.9. Others

- 13. Global Natural Language Processing (NLP) Platforms Market Analysis, by Industry Vertical

- 13.1. Key Segment Analysis

- 13.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Industry Vertical, 2021-2035

- 13.2.1. IT & Telecom

- 13.2.2. Financial Services & Banking

- 13.2.3. Healthcare & Life Sciences

- 13.2.4. Retail & E-commerce

- 13.2.5. Media & Entertainment

- 13.2.6. Government & Public Sector

- 13.2.7. Legal Services

- 13.2.8. Education & EdTech

- 13.2.9. Others

- 14. Global Natural Language Processing (NLP) Platforms Market Analysis and Forecasts, by Region

- 14.1. Key Findings

- 14.2. Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, by Region, 2021-2035

- 14.2.1. North America

- 14.2.2. Europe

- 14.2.3. Asia Pacific

- 14.2.4. Middle East

- 14.2.5. Africa

- 14.2.6. South America

- 15. North America Natural Language Processing (NLP) Platforms Market Analysis

- 15.1. Key Segment Analysis

- 15.2. Regional Snapshot

- 15.3. North America Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, 2021-2035

- 15.3.1. Component

- 15.3.2. Deployment Mode

- 15.3.3. Technology

- 15.3.4. Functionality

- 15.3.5. Integration

- 15.3.6. Organization Size

- 15.3.7. Application/ Use Case

- 15.3.8. Industry Vertical

- 15.3.9. Country

- 15.3.9.1. USA

- 15.3.9.2. Canada

- 15.3.9.3. Mexico

- 15.4. USA Natural Language Processing (NLP) Platforms Market

- 15.4.1. Country Segmental Analysis

- 15.4.2. Component

- 15.4.3. Deployment Mode

- 15.4.4. Technology

- 15.4.5. Functionality

- 15.4.6. Integration

- 15.4.7. Organization Size

- 15.4.8. Application/ Use Case

- 15.4.9. Industry Vertical

- 15.5. Canada Natural Language Processing (NLP) Platforms Market

- 15.5.1. Country Segmental Analysis

- 15.5.2. Component

- 15.5.3. Deployment Mode

- 15.5.4. Technology

- 15.5.5. Functionality

- 15.5.6. Integration

- 15.5.7. Organization Size

- 15.5.8. Application/ Use Case

- 15.5.9. Industry Vertical

- 15.6. Mexico Natural Language Processing (NLP) Platforms Market

- 15.6.1. Country Segmental Analysis

- 15.6.2. Component

- 15.6.3. Deployment Mode

- 15.6.4. Technology

- 15.6.5. Functionality

- 15.6.6. Integration

- 15.6.7. Organization Size

- 15.6.8. Application/ Use Case

- 15.6.9. Industry Vertical

- 16. Europe Natural Language Processing (NLP) Platforms Market Analysis

- 16.1. Key Segment Analysis

- 16.2. Regional Snapshot

- 16.3. Europe Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, 2021-2035

- 16.3.1. Component

- 16.3.2. Deployment Mode

- 16.3.3. Technology

- 16.3.4. Functionality

- 16.3.5. Integration

- 16.3.6. Organization Size

- 16.3.7. Application/ Use Case

- 16.3.8. Industry Vertical

- 16.3.9. Country

- 16.3.9.1. Germany

- 16.3.9.2. United Kingdom

- 16.3.9.3. France

- 16.3.9.4. Italy

- 16.3.9.5. Spain

- 16.3.9.6. Netherlands

- 16.3.9.7. Nordic Countries

- 16.3.9.8. Poland

- 16.3.9.9. Russia & CIS

- 16.3.9.10. Rest of Europe

- 16.4. Germany Natural Language Processing (NLP) Platforms Market

- 16.4.1. Country Segmental Analysis

- 16.4.2. Component

- 16.4.3. Deployment Mode

- 16.4.4. Technology

- 16.4.5. Functionality

- 16.4.6. Integration

- 16.4.7. Organization Size

- 16.4.8. Application/ Use Case

- 16.4.9. Industry Vertical

- 16.5. United Kingdom Natural Language Processing (NLP) Platforms Market

- 16.5.1. Country Segmental Analysis

- 16.5.2. Component

- 16.5.3. Deployment Mode

- 16.5.4. Technology

- 16.5.5. Functionality

- 16.5.6. Integration

- 16.5.7. Organization Size

- 16.5.8. Application/ Use Case

- 16.5.9. Industry Vertical

- 16.6. France Natural Language Processing (NLP) Platforms Market

- 16.6.1. Country Segmental Analysis

- 16.6.2. Component

- 16.6.3. Deployment Mode

- 16.6.4. Technology

- 16.6.5. Functionality

- 16.6.6. Integration

- 16.6.7. Organization Size

- 16.6.8. Application/ Use Case

- 16.6.9. Industry Vertical

- 16.7. Italy Natural Language Processing (NLP) Platforms Market

- 16.7.1. Country Segmental Analysis

- 16.7.2. Component

- 16.7.3. Deployment Mode

- 16.7.4. Technology

- 16.7.5. Functionality

- 16.7.6. Integration

- 16.7.7. Organization Size

- 16.7.8. Application/ Use Case

- 16.7.9. Industry Vertical

- 16.8. Spain Natural Language Processing (NLP) Platforms Market

- 16.8.1. Country Segmental Analysis

- 16.8.2. Component

- 16.8.3. Deployment Mode

- 16.8.4. Technology

- 16.8.5. Functionality

- 16.8.6. Integration

- 16.8.7. Organization Size

- 16.8.8. Application/ Use Case

- 16.8.9. Industry Vertical

- 16.9. Netherlands Natural Language Processing (NLP) Platforms Market

- 16.9.1. Country Segmental Analysis

- 16.9.2. Component

- 16.9.3. Deployment Mode

- 16.9.4. Technology

- 16.9.5. Functionality

- 16.9.6. Integration

- 16.9.7. Organization Size

- 16.9.8. Application/ Use Case

- 16.9.9. Industry Vertical

- 16.10. Nordic Countries Natural Language Processing (NLP) Platforms Market

- 16.10.1. Country Segmental Analysis

- 16.10.2. Component

- 16.10.3. Deployment Mode

- 16.10.4. Technology

- 16.10.5. Functionality

- 16.10.6. Integration

- 16.10.7. Organization Size

- 16.10.8. Application/ Use Case

- 16.10.9. Industry Vertical

- 16.11. Poland Natural Language Processing (NLP) Platforms Market

- 16.11.1. Country Segmental Analysis

- 16.11.2. Component

- 16.11.3. Deployment Mode

- 16.11.4. Technology

- 16.11.5. Functionality

- 16.11.6. Integration

- 16.11.7. Organization Size

- 16.11.8. Application/ Use Case

- 16.11.9. Industry Vertical

- 16.12. Russia & CIS Natural Language Processing (NLP) Platforms Market

- 16.12.1. Country Segmental Analysis

- 16.12.2. Component

- 16.12.3. Deployment Mode

- 16.12.4. Technology

- 16.12.5. Functionality

- 16.12.6. Integration

- 16.12.7. Organization Size

- 16.12.8. Application/ Use Case

- 16.12.9. Industry Vertical

- 16.13. Rest of Europe Natural Language Processing (NLP) Platforms Market

- 16.13.1. Country Segmental Analysis

- 16.13.2. Component

- 16.13.3. Deployment Mode

- 16.13.4. Technology

- 16.13.5. Functionality

- 16.13.6. Integration

- 16.13.7. Organization Size

- 16.13.8. Application/ Use Case

- 16.13.9. Industry Vertical

- 17. Asia Pacific Natural Language Processing (NLP) Platforms Market Analysis

- 17.1. Key Segment Analysis

- 17.2. Regional Snapshot

- 17.3. Asia Pacific Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, 2021-2035

- 17.3.1. Component

- 17.3.2. Deployment Mode

- 17.3.3. Technology

- 17.3.4. Functionality

- 17.3.5. Integration

- 17.3.6. Organization Size

- 17.3.7. Application/ Use Case

- 17.3.8. Industry Vertical

- 17.3.9. Country

- 17.3.9.1. China

- 17.3.9.2. India

- 17.3.9.3. Japan

- 17.3.9.4. South Korea

- 17.3.9.5. Australia and New Zealand

- 17.3.9.6. Indonesia

- 17.3.9.7. Malaysia

- 17.3.9.8. Thailand

- 17.3.9.9. Vietnam

- 17.3.9.10. Rest of Asia Pacific

- 17.4. China Natural Language Processing (NLP) Platforms Market

- 17.4.1. Country Segmental Analysis

- 17.4.2. Component

- 17.4.3. Deployment Mode

- 17.4.4. Technology

- 17.4.5. Functionality

- 17.4.6. Integration

- 17.4.7. Organization Size

- 17.4.8. Application/ Use Case

- 17.4.9. Industry Vertical

- 17.5. India Natural Language Processing (NLP) Platforms Market

- 17.5.1. Country Segmental Analysis

- 17.5.2. Component

- 17.5.3. Deployment Mode

- 17.5.4. Technology

- 17.5.5. Functionality

- 17.5.6. Integration

- 17.5.7. Organization Size

- 17.5.8. Application/ Use Case

- 17.5.9. Industry Vertical

- 17.6. Japan Natural Language Processing (NLP) Platforms Market

- 17.6.1. Country Segmental Analysis

- 17.6.2. Component

- 17.6.3. Deployment Mode

- 17.6.4. Technology

- 17.6.5. Functionality

- 17.6.6. Integration

- 17.6.7. Organization Size

- 17.6.8. Application/ Use Case

- 17.6.9. Industry Vertical

- 17.7. South Korea Natural Language Processing (NLP) Platforms Market

- 17.7.1. Country Segmental Analysis

- 17.7.2. Component

- 17.7.3. Deployment Mode

- 17.7.4. Technology

- 17.7.5. Functionality

- 17.7.6. Integration

- 17.7.7. Organization Size

- 17.7.8. Application/ Use Case

- 17.7.9. Industry Vertical

- 17.8. Australia and New Zealand Natural Language Processing (NLP) Platforms Market

- 17.8.1. Country Segmental Analysis

- 17.8.2. Component

- 17.8.3. Deployment Mode

- 17.8.4. Technology

- 17.8.5. Functionality

- 17.8.6. Integration

- 17.8.7. Organization Size

- 17.8.8. Application/ Use Case

- 17.8.9. Industry Vertical

- 17.9. Indonesia Natural Language Processing (NLP) Platforms Market

- 17.9.1. Country Segmental Analysis

- 17.9.2. Component

- 17.9.3. Deployment Mode

- 17.9.4. Technology

- 17.9.5. Functionality

- 17.9.6. Integration

- 17.9.7. Organization Size

- 17.9.8. Application/ Use Case

- 17.9.9. Industry Vertical

- 17.10. Malaysia Natural Language Processing (NLP) Platforms Market

- 17.10.1. Country Segmental Analysis

- 17.10.2. Component

- 17.10.3. Deployment Mode

- 17.10.4. Technology

- 17.10.5. Functionality

- 17.10.6. Integration

- 17.10.7. Organization Size

- 17.10.8. Application/ Use Case

- 17.10.9. Industry Vertical

- 17.11. Thailand Natural Language Processing (NLP) Platforms Market

- 17.11.1. Country Segmental Analysis

- 17.11.2. Component

- 17.11.3. Deployment Mode

- 17.11.4. Technology

- 17.11.5. Functionality

- 17.11.6. Integration

- 17.11.7. Organization Size

- 17.11.8. Application/ Use Case

- 17.11.9. Industry Vertical

- 17.12. Vietnam Natural Language Processing (NLP) Platforms Market

- 17.12.1. Country Segmental Analysis

- 17.12.2. Component

- 17.12.3. Deployment Mode

- 17.12.4. Technology

- 17.12.5. Functionality

- 17.12.6. Integration

- 17.12.7. Organization Size

- 17.12.8. Application/ Use Case

- 17.12.9. Industry Vertical

- 17.13. Rest of Asia Pacific Natural Language Processing (NLP) Platforms Market

- 17.13.1. Country Segmental Analysis

- 17.13.2. Component

- 17.13.3. Deployment Mode

- 17.13.4. Technology

- 17.13.5. Functionality

- 17.13.6. Integration

- 17.13.7. Organization Size

- 17.13.8. Application/ Use Case

- 17.13.9. Industry Vertical

- 18. Middle East Natural Language Processing (NLP) Platforms Market Analysis

- 18.1. Key Segment Analysis

- 18.2. Regional Snapshot

- 18.3. Middle East Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, 2021-2035

- 18.3.1. Component

- 18.3.2. Deployment Mode

- 18.3.3. Technology

- 18.3.4. Functionality

- 18.3.5. Integration

- 18.3.6. Organization Size

- 18.3.7. Application/ Use Case

- 18.3.8. Industry Vertical

- 18.3.9. Country

- 18.3.9.1. Turkey

- 18.3.9.2. UAE

- 18.3.9.3. Saudi Arabia

- 18.3.9.4. Israel

- 18.3.9.5. Rest of Middle East

- 18.4. Turkey Natural Language Processing (NLP) Platforms Market

- 18.4.1. Country Segmental Analysis

- 18.4.2. Component

- 18.4.3. Deployment Mode

- 18.4.4. Technology

- 18.4.5. Functionality

- 18.4.6. Integration

- 18.4.7. Organization Size

- 18.4.8. Application/ Use Case

- 18.4.9. Industry Vertical

- 18.5. UAE Natural Language Processing (NLP) Platforms Market

- 18.5.1. Country Segmental Analysis

- 18.5.2. Component

- 18.5.3. Deployment Mode

- 18.5.4. Technology

- 18.5.5. Functionality

- 18.5.6. Integration

- 18.5.7. Organization Size

- 18.5.8. Application/ Use Case

- 18.5.9. Industry Vertical

- 18.6. Saudi Arabia Natural Language Processing (NLP) Platforms Market

- 18.6.1. Country Segmental Analysis

- 18.6.2. Component

- 18.6.3. Deployment Mode

- 18.6.4. Technology

- 18.6.5. Functionality

- 18.6.6. Integration

- 18.6.7. Organization Size

- 18.6.8. Application/ Use Case

- 18.6.9. Industry Vertical

- 18.7. Israel Natural Language Processing (NLP) Platforms Market

- 18.7.1. Country Segmental Analysis

- 18.7.2. Component

- 18.7.3. Deployment Mode

- 18.7.4. Technology

- 18.7.5. Functionality

- 18.7.6. Integration

- 18.7.7. Organization Size

- 18.7.8. Application/ Use Case

- 18.7.9. Industry Vertical

- 18.8. Rest of Middle East Natural Language Processing (NLP) Platforms Market

- 18.8.1. Country Segmental Analysis

- 18.8.2. Component

- 18.8.3. Deployment Mode

- 18.8.4. Technology

- 18.8.5. Functionality

- 18.8.6. Integration

- 18.8.7. Organization Size

- 18.8.8. Application/ Use Case

- 18.8.9. Industry Vertical

- 19. Africa Natural Language Processing (NLP) Platforms Market Analysis

- 19.1. Key Segment Analysis

- 19.2. Regional Snapshot

- 19.3. Africa Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, 2021-2035

- 19.3.1. Component

- 19.3.2. Deployment Mode

- 19.3.3. Technology

- 19.3.4. Functionality

- 19.3.5. Integration

- 19.3.6. Organization Size

- 19.3.7. Application/ Use Case

- 19.3.8. Industry Vertical

- 19.3.9. Country

- 19.3.9.1. South Africa

- 19.3.9.2. Egypt

- 19.3.9.3. Nigeria

- 19.3.9.4. Algeria

- 19.3.9.5. Rest of Africa

- 19.4. South Africa Natural Language Processing (NLP) Platforms Market

- 19.4.1. Country Segmental Analysis

- 19.4.2. Component

- 19.4.3. Deployment Mode

- 19.4.4. Technology

- 19.4.5. Functionality

- 19.4.6. Integration

- 19.4.7. Organization Size

- 19.4.8. Application/ Use Case

- 19.4.9. Industry Vertical

- 19.5. Egypt Natural Language Processing (NLP) Platforms Market

- 19.5.1. Country Segmental Analysis

- 19.5.2. Component

- 19.5.3. Deployment Mode

- 19.5.4. Technology

- 19.5.5. Functionality

- 19.5.6. Integration

- 19.5.7. Organization Size

- 19.5.8. Application/ Use Case

- 19.5.9. Industry Vertical

- 19.6. Nigeria Natural Language Processing (NLP) Platforms Market

- 19.6.1. Country Segmental Analysis

- 19.6.2. Component

- 19.6.3. Deployment Mode

- 19.6.4. Technology

- 19.6.5. Functionality

- 19.6.6. Integration

- 19.6.7. Organization Size

- 19.6.8. Application/ Use Case

- 19.6.9. Industry Vertical

- 19.7. Algeria Natural Language Processing (NLP) Platforms Market

- 19.7.1. Country Segmental Analysis

- 19.7.2. Component

- 19.7.3. Deployment Mode

- 19.7.4. Technology

- 19.7.5. Functionality

- 19.7.6. Integration

- 19.7.7. Organization Size

- 19.7.8. Application/ Use Case

- 19.7.9. Industry Vertical

- 19.8. Rest of Africa Natural Language Processing (NLP) Platforms Market

- 19.8.1. Country Segmental Analysis

- 19.8.2. Component

- 19.8.3. Deployment Mode

- 19.8.4. Technology

- 19.8.5. Functionality

- 19.8.6. Integration

- 19.8.7. Organization Size

- 19.8.8. Application/ Use Case

- 19.8.9. Industry Vertical

- 20. South America Natural Language Processing (NLP) Platforms Market Analysis

- 20.1. Key Segment Analysis

- 20.2. Regional Snapshot

- 20.3. South America Natural Language Processing (NLP) Platforms Market Size (Value - US$ Bn), Analysis, and Forecasts, 2021-2035

- 20.3.1. Component

- 20.3.2. Deployment Mode

- 20.3.3. Technology

- 20.3.4. Functionality

- 20.3.5. Integration

- 20.3.6. Organization Size

- 20.3.7. Application/ Use Case

- 20.3.8. Industry Vertical

- 20.3.9. Country

- 20.3.9.1. Brazil

- 20.3.9.2. Argentina

- 20.3.9.3. Rest of South America

- 20.4. Brazil Natural Language Processing (NLP) Platforms Market

- 20.4.1. Country Segmental Analysis

- 20.4.2. Component

- 20.4.3. Deployment Mode

- 20.4.4. Technology

- 20.4.5. Functionality

- 20.4.6. Integration

- 20.4.7. Organization Size

- 20.4.8. Application/ Use Case

- 20.4.9. Industry Vertical

- 20.5. Argentina Natural Language Processing (NLP) Platforms Market

- 20.5.1. Country Segmental Analysis

- 20.5.2. Component

- 20.5.3. Deployment Mode

- 20.5.4. Technology

- 20.5.5. Functionality

- 20.5.6. Integration

- 20.5.7. Organization Size

- 20.5.8. Application/ Use Case

- 20.5.9. Industry Vertical

- 20.6. Rest of South America Natural Language Processing (NLP) Platforms Market

- 20.6.1. Country Segmental Analysis

- 20.6.2. Component

- 20.6.3. Deployment Mode

- 20.6.4. Technology

- 20.6.5. Functionality

- 20.6.6. Integration

- 20.6.7. Organization Size

- 20.6.8. Application/ Use Case

- 20.6.9. Industry Vertical

- 21. Key Players/ Company Profile

- 21.1. AI21 Labs

- 21.1.1. Company Details/ Overview

- 21.1.2. Company Financials

- 21.1.3. Key Customers and Competitors

- 21.1.4. Business/ Industry Portfolio

- 21.1.5. Product Portfolio/ Specification Details

- 21.1.6. Pricing Data

- 21.1.7. Strategic Overview

- 21.1.8. Recent Developments

- 21.2. Alibaba Cloud

- 21.3. Amazon Web Services

- 21.4. Anthropic

- 21.5. Baidu

- 21.6. Cohere

- 21.7. DataRobot

- 21.8. Deepgram/ AssemblyAI

- 21.9. Google

- 21.10. Hugging Face

- 21.11. IBM (Watson)

- 21.12. Microsoft

- 21.13. OpenAI

- 21.14. Oracle

- 21.15. Rasa

- 21.16. Salesforce

- 21.17. SAP

- 21.18. SAS

- 21.19. Sogou/ iFLYTEK

- 21.20. Tencent Cloud

Note*: This is a tentative list of players. The final report will cover additional players based on their revenue and share in each geography.

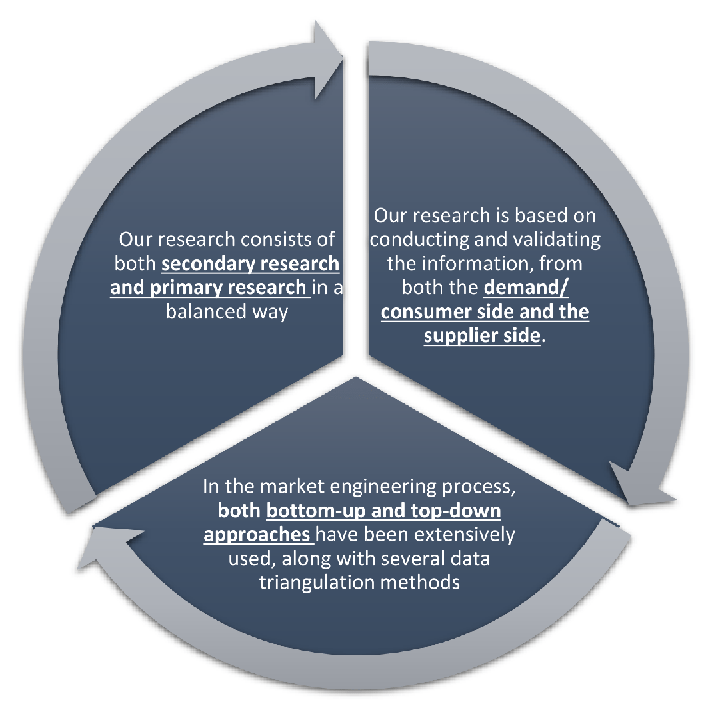

Research Design

Our research design integrates both demand-side and supply-side analysis through a balanced combination of primary and secondary research methodologies. By utilizing both bottom-up and top-down approaches alongside rigorous data triangulation methods, we deliver robust market intelligence that supports strategic decision-making.

MarketGenics' comprehensive research design framework ensures the delivery of accurate, reliable, and actionable market intelligence. Through the integration of multiple research approaches, rigorous validation processes, and expert analysis, we provide our clients with the insights needed to make informed strategic decisions and capitalize on market opportunities.

MarketGenics leverages a dedicated industry panel of experts and a comprehensive suite of paid databases to effectively collect, consolidate, and analyze market intelligence.

Our approach has consistently proven to be reliable and effective in generating accurate market insights, identifying key industry trends, and uncovering emerging business opportunities.

Through both primary and secondary research, we capture and analyze critical company-level data such as manufacturing footprints, including technical centers, R&D facilities, sales offices, and headquarters.

Our expert panel further enhances our ability to estimate market size for specific brands based on validated field-level intelligence.

Our data mining techniques incorporate both parametric and non-parametric methods, allowing for structured data collection, sorting, processing, and cleaning.

Demand projections are derived from large-scale data sets analyzed through proprietary algorithms, culminating in robust and reliable market sizing.

Research Approach

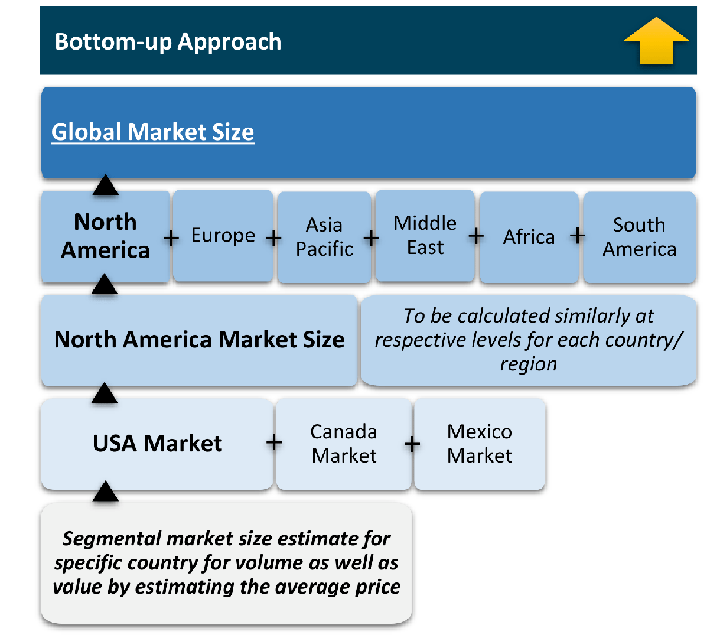

The bottom-up approach builds market estimates by starting with the smallest addressable market units and systematically aggregating them to create comprehensive market size projections.

This method begins with specific, granular data points and builds upward to create the complete market landscape.

Customer Analysis → Segmental Analysis → Geographical Analysis
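The bottom-up aggregation described above can be sketched in a few lines of Python. This is only an illustration: the records, region and segment names, and the `bottom_up_total` helper are hypothetical placeholders, not data or tooling from this report.

```python
from collections import defaultdict

# Hypothetical granular records: (region, segment, customer revenue in US$ Mn).
# All values are made-up placeholders for illustration.
RECORDS = [
    ("North America", "Cloud", 120.0),
    ("North America", "On-premise", 45.0),
    ("Europe", "Cloud", 80.0),
    ("Europe", "On-premise", 30.0),
    ("Asia Pacific", "Cloud", 95.0),
]


def bottom_up_total(records):
    """Roll customer-level revenue up to segment, region, and total market."""
    by_segment = defaultdict(float)
    by_region = defaultdict(float)
    for region, segment, revenue in records:
        by_segment[(region, segment)] += revenue
        by_region[region] += revenue
    total = sum(by_region.values())
    return dict(by_segment), dict(by_region), total


segments, regions, total = bottom_up_total(RECORDS)
print(regions)
print(total)
```

Each level is a plain sum of the level below it, so any regional or global figure can be traced back to the customer-level inputs that produced it.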

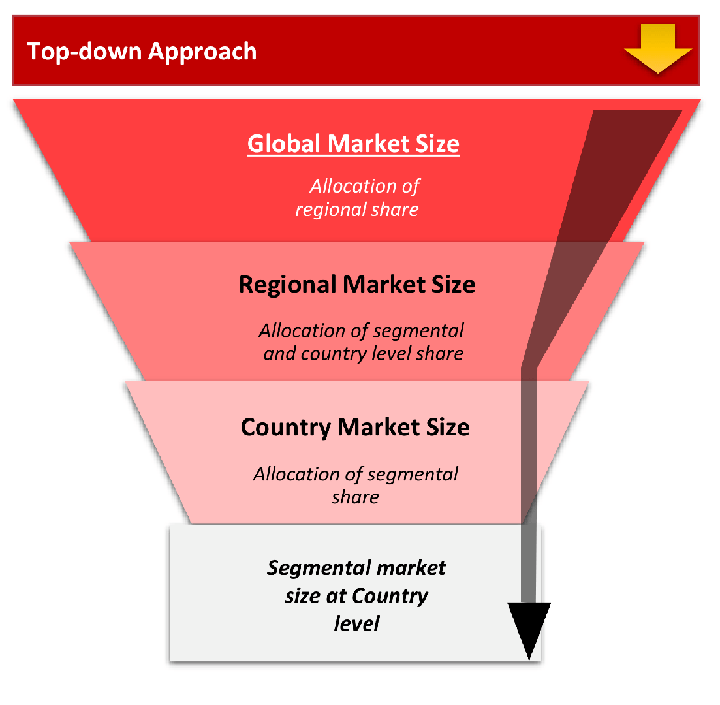

The top-down approach starts with the broadest possible market data and systematically narrows it down through a series of filters and assumptions to arrive at specific market segments or opportunities.

This method begins with the big picture and works downward to increasingly specific market slices.

TAM → SAM → SOM
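As a rough sketch of the TAM → SAM → SOM funnel, the snippet below narrows a total market figure by two share assumptions. The `top_down` function, the input value, and both shares are hypothetical and chosen only to show the arithmetic.

```python
def top_down(tam, serviceable_share, obtainable_share):
    """Narrow a total addressable market (TAM) to SAM and then SOM.

    serviceable_share: assumed fraction of TAM that can be served.
    obtainable_share: assumed fraction of SAM that can realistically be won.
    """
    for share in (serviceable_share, obtainable_share):
        if not 0.0 <= share <= 1.0:
            raise ValueError("shares must be between 0 and 1")
    sam = tam * serviceable_share
    som = sam * obtainable_share
    return sam, som


# Hypothetical inputs: TAM of 1,000 (US$ Mn), 40% serviceable, 10% obtainable.
sam, som = top_down(tam=1000.0, serviceable_share=0.4, obtainable_share=0.1)
print(round(sam, 2), round(som, 2))
```

Each filter is an explicit assumption, which makes it straightforward to see how sensitive the final slice is to any single share.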

Research Methods

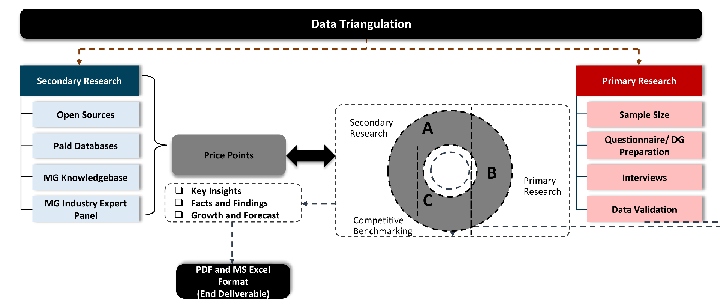

Desk / Secondary Research

While analysing the market, we extensively study secondary sources, directories, and databases to identify and collect information useful for this technical, market-oriented, and commercial report. The secondary sources we use are not limited to public sources; they combine open sources, associations, paid databases, the MG Repository & Knowledgebase, and others.

- Company websites, annual reports, financial reports, broker reports, and investor presentations

- National government documents, statistical databases and reports

- News articles, press releases, and webcasts specific to the companies operating in the market, as well as magazines, reports, and others

- Commercial data sources, used to derive company-specific data such as segmental revenue, share by geography, product revenue, and others

- Internal and external proprietary databases (industry-specific), relevant patent, and regulatory databases

- Governing Bodies, Government Organizations

- Relevant Authorities, Country-specific Associations for Industries

We also employ a model mapping approach to estimate product-level market data through the players' product portfolios.

Primary Research

Primary research through interviews is vital in analyzing the market. Most cases involve paid primary interviews. Primary sources include e-mail interactions, telephonic interviews, surveys, and face-to-face interviews with different stakeholders across the value chain, including several industry experts.

| Type of Respondents | Number of Primaries |
|---|---|
| Tier 2/3 Suppliers | ~20 |
| Tier 1 Suppliers | ~25 |
| End-users | ~25 |
| Industry Expert/ Panel/ Consultant | ~30 |
| Total | ~100 |

MG Knowledgebase

- Repository of industry blogs, newsletters, and case studies

- Online platform covering detailed market reports and company profiles

Forecasting Factors and Models

Forecasting Factors

- Historical Trends – Past market patterns, cycles, and major events that shaped how markets behave over time. Understanding past trends helps predict future behavior.

- Industry Factors – Specific characteristics of the industry like structure, regulations, and innovation cycles that affect market dynamics.

- Macroeconomic Factors – Economic conditions like GDP growth, inflation, and employment rates that affect how much money people have to spend.

- Demographic Factors – Population characteristics like age, income, and location that determine who can buy the product.

- Technology Factors – How quickly people adopt new technology and how much technology infrastructure exists.

- Regulatory Factors – Government rules, laws, and policies that can help or restrict market growth.

- Competitive Factors – Analyzing competition structure such as degree of competition and bargaining power of buyers and suppliers.

Forecasting Models / Techniques

Multiple Regression Analysis

- Identify and quantify factors that drive market changes

- Statistical modeling to establish relationships between market drivers and outcomes

Time Series Analysis – Seasonal Patterns

- Understand regular cyclical patterns in market demand

- Advanced statistical techniques to separate trend, seasonal, and irregular components

Time Series Analysis – Trend Analysis

- Identify underlying market growth patterns and momentum

- Statistical analysis of historical data to project future trends
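One simple way to project a trend from historical data is to fit a straight line to the logarithm of the series and read the slope as a constant growth rate. The sketch below assumes that approach. The `fit_log_linear_trend` and `project` helpers and the synthetic history are illustrative assumptions and do not represent the report's proprietary models.

```python
import math


def fit_log_linear_trend(periods, values):
    """Least-squares fit of ln(value) = intercept + slope * period."""
    n = len(periods)
    logs = [math.log(v) for v in values]
    mean_x = sum(periods) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in periods)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(periods, logs))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def project(intercept, slope, period):
    """Project the fitted trend to a future period."""
    return math.exp(intercept + slope * period)


# Synthetic history (hypothetical): 100 in period 0, growing 20% per period.
periods = [0, 1, 2, 3, 4]
history = [100.0 * 1.2 ** t for t in periods]

intercept, slope = fit_log_linear_trend(periods, history)
annual_growth = math.exp(slope) - 1.0  # growth rate implied by the slope
next_value = project(intercept, slope, 5)
print(round(annual_growth, 4), round(next_value, 2))
```

Real series are noisier than this synthetic one, so the fitted growth rate would normally be checked against residuals and the other techniques listed here before being used in a forecast.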

Expert Opinion – Expert Interviews

- Gather deep industry insights and contextual understanding

- In-depth interviews with key industry stakeholders

Multi-Scenario Development

- Prepare for uncertainty by modeling different possible futures

- Creating optimistic, pessimistic, and most likely scenarios

Time Series Analysis – Moving Averages

- Sophisticated forecasting for complex time series data

- Auto-regressive integrated moving average models with seasonal components

Econometric Models

- Apply economic theory to market forecasting

- Sophisticated economic models that account for market interactions

Expert Opinion – Delphi Method

- Harness collective wisdom of industry experts

- Structured, multi-round expert consultation process

Monte Carlo Simulation

- Quantify uncertainty and probability distributions

- Thousands of simulations with varying input parameters
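A minimal Monte Carlo sketch, assuming annual growth is drawn independently from a normal distribution each year, is shown below. The base value, growth mean, standard deviation, and the `simulate_market_size` helper are hypothetical choices for illustration, not parameters from this report.

```python
import random
import statistics


def simulate_market_size(base_value, mean_growth, growth_sd, years, runs, seed=0):
    """Return sorted end-of-horizon market sizes from repeated random runs."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    outcomes = []
    for _ in range(runs):
        value = base_value
        for _ in range(years):
            # Assumption: each year's growth rate is an independent normal draw.
            value *= 1.0 + rng.gauss(mean_growth, growth_sd)
        outcomes.append(value)
    return sorted(outcomes)


# Hypothetical inputs: base of 100, 10% mean growth, 3 points of spread, 5 years.
outcomes = simulate_market_size(100.0, 0.10, 0.03, years=5, runs=5000)
p5 = outcomes[int(0.05 * len(outcomes))]
median = statistics.median(outcomes)
p95 = outcomes[int(0.95 * len(outcomes))]
print(round(p5, 1), round(median, 1), round(p95, 1))
```

The 5th and 95th percentiles give a range around the median outcome, which is the kind of uncertainty band that a single-point forecast cannot express.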

Research Analysis

Our research framework is built upon the fundamental principle of validating market intelligence from both demand and supply perspectives. This dual-sided approach ensures comprehensive market understanding and reduces the risk of single-source bias.

Demand-Side Analysis: We examine end-user and application behavior, preferences, and market needs, along with the product's penetration in specific applications.

Supply-Side Analysis: We estimate overall market revenue, analyze the segmental share along with industry capacity, competitive landscape, and market structure.

Validation & Evaluation

Data triangulation is a validation technique that uses multiple methods, sources, or perspectives to examine the same research question, thereby increasing the credibility and reliability of research findings. In market research, triangulation serves as a quality assurance mechanism that helps identify and minimize bias, validate assumptions, and ensure accuracy in market estimates.

- Data Source Triangulation – Using multiple data sources to examine the same phenomenon

- Methodological Triangulation – Using multiple research methods to study the same research question

- Investigator Triangulation – Using multiple researchers or analysts to examine the same data

- Theoretical Triangulation – Using multiple theoretical perspectives to interpret the same data
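Data source triangulation can be illustrated with a small consistency check: compare independent estimates of the same quantity, flag any that sit far from the median, and average the rest. The estimates, the 15% tolerance, and the `triangulate` helper below are hypothetical and serve only as a sketch of the idea.

```python
import statistics


def triangulate(estimates, tolerance=0.15):
    """Flag estimates deviating from the median by more than `tolerance`
    (as a fraction of the median) and average the remaining ones."""
    center = statistics.median(estimates.values())
    flagged = {
        name: value
        for name, value in estimates.items()
        if abs(value - center) / center > tolerance
    }
    agreed = [v for name, v in estimates.items() if name not in flagged]
    return statistics.mean(agreed), flagged


# Hypothetical estimates of the same market size from four approaches.
estimates = {
    "bottom_up": 98.0,
    "top_down": 105.0,
    "expert_panel": 100.0,
    "vendor_filings": 140.0,
}
consensus, outliers = triangulate(estimates)
print(consensus, outliers)
```

A flagged estimate is not discarded automatically. It signals a source or assumption that deserves a second look before the figures are reconciled.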

Custom Market Research Services

If the report listed above does not meet your requirements, we will customise the research for you.
